The Quirk Contract

With enough users of your LLM-powered system, every observable behavior (token-level formatting, specific hallucination patterns, a model’s tendency to hedge, even latency) becomes a hard dependency for someone downstream. This is why a “drop-in” model swap from GPT-4 to Claude to Qwen is never drop-in: you’re not replacing a model, you’re breaking a thousand undocumented contracts.
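One way to expose these undocumented contracts before a swap is to diff the surface traits of old and new outputs on the same prompts. Below is a minimal Python sketch. The trait list and the `HEDGES` phrases are illustrative assumptions, not a complete inventory of what your consumers depend on, and the hedge check is a crude substring match.

```python
import json
import re

FENCE = "`" * 3  # a Markdown code fence, built this way to avoid a literal fence here
# Hypothetical hedge phrases; tune to whatever your downstream consumers key on.
HEDGES = ("i think", "it seems", "possibly", "might")


def observable_traits(text: str) -> dict:
    """Extract surface traits that downstream code may silently depend on."""
    stripped = text.strip()
    try:
        json.loads(stripped)
        is_json = True
    except ValueError:  # json.JSONDecodeError subclasses ValueError
        is_json = False
    lower = stripped.lower()
    return {
        "is_json": is_json,
        "has_markdown_fence": FENCE in stripped,
        "has_bullets": bool(re.search(r"^\s*[-*] ", stripped, re.MULTILINE)),
        "hedges": any(h in lower for h in HEDGES),  # crude substring check
        "trailing_newline": text.endswith("\n"),
    }


def contract_diff(old_output: str, new_output: str) -> dict:
    """Return {trait: (old, new)} for every trait that changed between models."""
    old, new = observable_traits(old_output), observable_traits(new_output)
    return {k: (old[k], new[k]) for k in old if old[k] != new[k]}
```

Run it over a sample of logged production prompts. Each non-empty diff is a candidate contract that someone downstream may be relying on.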

The Gall Seed Principle

Every robust multi-agent system in production evolved from a boring single-turn loop that worked. The teams that start by designing a seven-agent hierarchical planner-executor-critic-router-… almost always ship nothing. Start with one agent, one tool, one eval; then let complexity earn its place.
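In that spirit, here is roughly what the boring seed looks like: one loop, one tool, one golden eval. `fake_model` is a hypothetical stand-in for a real LLM call. The `CALL`/`ANSWER` text protocol is also an assumption, chosen to keep the sketch self-contained.

```python
import re
from typing import Callable


def calculator(expression: str) -> str:
    """The one tool: adds two integers written as 'a + b' (deliberately tiny)."""
    a, b = (int(x) for x in expression.split("+"))
    return str(a + b)


def fake_model(prompt: str) -> str:
    """Hypothetical LLM stand-in: requests the tool once, then answers from its result."""
    if "TOOL RESULT:" in prompt:
        return "ANSWER: " + prompt.rsplit("TOOL RESULT:", 1)[1].strip()
    match = re.search(r"(\d+\s*\+\s*\d+)", prompt)
    return f"CALL calculator: {match.group(1)}" if match else "ANSWER: unknown"


def run_agent(question: str, model: Callable[[str], str], max_steps: int = 3) -> str:
    """Single-agent loop: ask the model, run the tool if asked, stop on an answer."""
    prompt = question
    for _ in range(max_steps):
        reply = model(prompt)
        if reply.startswith("ANSWER: "):
            return reply.removeprefix("ANSWER: ")
        if reply.startswith("CALL calculator: "):
            result = calculator(reply.removeprefix("CALL calculator: "))
            prompt += f"\nTOOL RESULT: {result}"
    return "gave up"


def eval_agent() -> bool:
    """The one eval: a single golden case."""
    return run_agent("What is 2 + 3?", fake_model) == "5"
```

Swap `fake_model` for a real client once the eval passes. Adding a second tool or a second agent should be driven by an eval case this loop fails.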

The Cleverness Tax

Debugging an agent is at least twice as hard as building it. So if you build the most sophisticated ReAct-style orchestrator you can imagine, by definition you’re not smart enough to debug it when it silently loops on tool call #14 in production. Ship the agent one notch below your ceiling.
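A cheap way to keep the silent loop debuggable is to make it loud. The sketch below uses a hypothetical `LoopGuard` class, not something any framework provides. It caps total steps and raises when the same tool is called with identical arguments too many times.

```python
import json
from collections import Counter


class LoopGuard:
    """Hypothetical guard that stops an agent which repeats itself or runs too long."""

    def __init__(self, max_steps: int = 20, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.seen = Counter()

    def check(self, tool: str, args: dict) -> None:
        """Call before each tool call; raises RuntimeError instead of looping silently."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise RuntimeError(f"step budget exceeded at step {self.steps}")
        # Canonicalize args so {'a': 1, 'b': 2} and {'b': 2, 'a': 1} count as one call.
        key = (tool, json.dumps(args, sort_keys=True))
        self.seen[key] += 1
        if self.seen[key] > self.max_repeats:
            raise RuntimeError(f"{tool} called {self.seen[key]} times with identical args")
```

An exception carrying the step count and the offending call is far easier to debug than an agent that is still thinking at tool call #14.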

The Eval Decay Principle

The same golden eval set, run repeatedly, becomes a worse measure of real progress over time: it leaks into prompts, gets overfit via hill-climbing, and stops catching the failure modes that actually matter now. Treat eval sets as perishable inventory: rotate, regenerate, adversarially augment.
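Rotation can be as simple as re-hashing the golden set each period so that a different slice is held out from prompt tuning. This sketch assumes cases have stable string IDs and that a period label such as `2025-Q1` is how you schedule rotation. Regeneration and adversarial augmentation are not shown.

```python
import hashlib


def rotation_bucket(case_id: str, period: str, n_buckets: int = 4) -> int:
    """Stable bucket for a case within a period; the assignment reshuffles each period."""
    digest = hashlib.sha256(f"{period}:{case_id}".encode()).hexdigest()
    return int(digest, 16) % n_buckets


def split_for_period(case_ids, period, n_buckets=4):
    """Bucket 0 is this period's held-out slice that nobody tunes prompts against."""
    dev, holdout = [], []
    for cid in case_ids:
        target = holdout if rotation_bucket(cid, period, n_buckets) == 0 else dev
        target.append(cid)
    return dev, holdout
```

Because the hash mixes in the period label, last quarter's held-out cases mostly land in this quarter's dev set, and a fresh slice becomes the untouched check.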

The Benchmark Trap

When a metric becomes the target for your agent or fine-tuned model, it stops being a good metric. Optimize for routing accuracy on a fixed test set and your router learns the test set. Optimize for a reward model and you get reward hacking. Every agent KPI silently mutates into a specification of how to game it.
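A rough early-warning heuristic is to keep a fresh set that the optimizer has never seen, then watch the gap between it and the set you tuned on. The routing labels and the 5-point `tolerance` below are hypothetical.

```python
def accuracy(predictions, labels) -> float:
    """Fraction of predictions that match their labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def goodhart_gap(dev_acc: float, fresh_acc: float, tolerance: float = 0.05) -> bool:
    """Flag when the score on the set you optimized against outruns a fresh held-out set."""
    return dev_acc - fresh_acc > tolerance


# Hypothetical router: perfect on the tuned set, coin-flip on fresh traffic.
dev_acc = accuracy(["billing", "tech", "billing"], ["billing", "tech", "billing"])
fresh_acc = accuracy(["billing", "billing", "tech", "tech"],
                     ["billing", "tech", "tech", "billing"])
print(dev_acc, fresh_acc, goodhart_gap(dev_acc, fresh_acc))
```

A flagged gap doesn't prove gaming, but it is the cheapest signal that the router has started learning the test set.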

The Hallucination Confidence Paradox

An LLM often sounds most confident in exactly the regions where it knows the least: rare entities, long-tail facts, niche domains. The surface polish of the output is uncorrelated with epistemic grounding, which is why “it sounded right” is the single most expensive phrase in GenAI deployment. Confidence is a UI feature, not a signal.
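If confidence is going to be used at all, measure whether it means anything. Expected calibration error (ECE) compares stated confidence with observed accuracy within confidence bins, and a well-calibrated model scores near zero. This sketch assumes you already have a per-answer confidence (self-reported or derived from token probabilities) and a correctness label for each answer.

```python
def expected_calibration_error(confidences, correct, n_bins: int = 10) -> float:
    """ECE: weighted mean of |accuracy - mean confidence| over equal-width bins."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so conf == 1.0 lands in the last bin rather than out of range.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        acc = sum(1 for _, ok in b if ok) / len(b)
        ece += len(b) / total * abs(acc - avg_conf)
    return ece


# Four answers all stated at 95% confidence, only half correct: ECE is about 0.45.
print(expected_calibration_error([0.95] * 4, [True, False, False, True]))
```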

The Latent Space Mirage

An embedding is a lossy projection, not a meaning. Cosine similarity in your semantic search is the map; user intent, product context, and business logic are the territory. Every system that treats “high similarity score” as “correct answer” eventually gets caught when a near-duplicate vector returns something the user would never consider relevant.
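One mitigation is to treat similarity as a ranking signal inside a candidate set that is already filtered by hard business rules, rather than as the verdict. The sketch below uses toy 2-D vectors. The `region` and `discontinued` fields and the 0.8 threshold are hypothetical.

```python
import math


def cosine(a, b) -> float:
    """Cosine similarity; raises ZeroDivisionError for zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def retrieve(query_vec, query_ctx, docs, min_score: float = 0.8):
    """Rank by cosine, but only among docs that pass hard business rules first.

    `docs` are dicts with hypothetical keys 'id', 'vec', 'region', 'discontinued'.
    """
    eligible = [d for d in docs
                if d["region"] == query_ctx["region"] and not d["discontinued"]]
    scored = [(cosine(query_vec, d["vec"]), d) for d in eligible]
    scored.sort(key=lambda t: t[0], reverse=True)
    return [d for score, d in scored if score >= min_score]


docs = [
    {"id": "A", "vec": [1.0, 0.0], "region": "EU", "discontinued": True},
    {"id": "B", "vec": [0.9, 0.1], "region": "EU", "discontinued": False},
    {"id": "C", "vec": [1.0, 0.0], "region": "US", "discontinued": False},
]
print([d["id"] for d in retrieve([1.0, 0.0], {"region": "EU"}, docs)])
```

Documents A and C are perfect cosine matches, but one is discontinued and the other serves the wrong region, so neither reaches this user.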

The Framework Fog

LangChain, LlamaIndex, and every agent SDK promise “provider-agnostic” interfaces that invariably leak the quirks of whichever model you’re actually running: tokenizer differences, tool-call schema variance, streaming behaviors, stop-sequence handling. The abstraction saves you on day one and costs you on day ninety, when a production bug requires you to know exactly what the framework did to your prompt.
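Whatever framework you use, it helps to capture the final payload at the lowest layer you control. That way the day-ninety question has a literal answer. This sketch assumes you can wrap a hypothetical `send(payload)` callable that sits below the framework. How to reach that layer depends on the framework and is not shown.

```python
import json
from typing import Any, Callable


def with_wire_capture(send: Callable[[dict], Any], sink: list) -> Callable[[dict], Any]:
    """Wrap a low-level send function and record a JSON snapshot of every payload."""
    def wrapped(payload: dict) -> Any:
        # Snapshot before sending, so later mutation can't hide what actually went out.
        sink.append(json.dumps(payload, sort_keys=True))
        return send(payload)
    return wrapped
```

In production the `sink` would be a log or trace store rather than a list. The point is that stop sequences, tool schemas, and injected system text are all recorded as they were actually sent.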

The Demo-to-Prod Chasm

The first 90% of your agent (the part that works in the notebook) takes 10% of the time. The remaining 10% (retries, timeouts, tool-call validation, structured output enforcement, cost controls, observability, red-teaming, rollback paths) takes the other 90%. At their launch celebration, most “AI POCs” are, optimistically, 10% of the way through the real work.

The Hype-Horizon Asymmetry

We overestimate what LLMs and agents can do in 6 months and underestimate what they’ll do in 6 years. This is why both the “AGI next year” camp and the “it’s just a chatbot” camp will age badly. Build for the slope of genuine capability growth, not the peak of quarterly discourse.

The Context Greed Law

Work expands to fill the time available, and agent reasoning expands to fill the context window available. Give an agent 200k tokens and it will find a way to use 180k of them on retrieved chunks, scratchpad thoughts, and tool-call history that don’t change the answer. Context budgets, like time budgets, need to be imposed from outside because nothing inside the loop is incentivized to stay small.
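Imposing the budget from outside can be as blunt as trimming history to a fixed token allowance before every model call. In this sketch the 4-characters-per-token estimate is a rough assumption. A real system would use the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude assumption: about 4 characters per token."""
    return max(1, len(text) // 4)


def fit_to_budget(system: str, history: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus the newest history items that fit in `budget` tokens."""
    used = estimate_tokens(system)
    if used > budget:
        raise ValueError("system prompt alone exceeds the budget")
    kept = []
    for item in reversed(history):  # walk newest first
        cost = estimate_tokens(item)
        if used + cost > budget:
            break
        kept.append(item)
        used += cost
    return [system] + list(reversed(kept))  # restore chronological order
```

Dropping the oldest items first is only one policy. Summarizing or scoring retrieved chunks are alternatives, each with its own costs.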

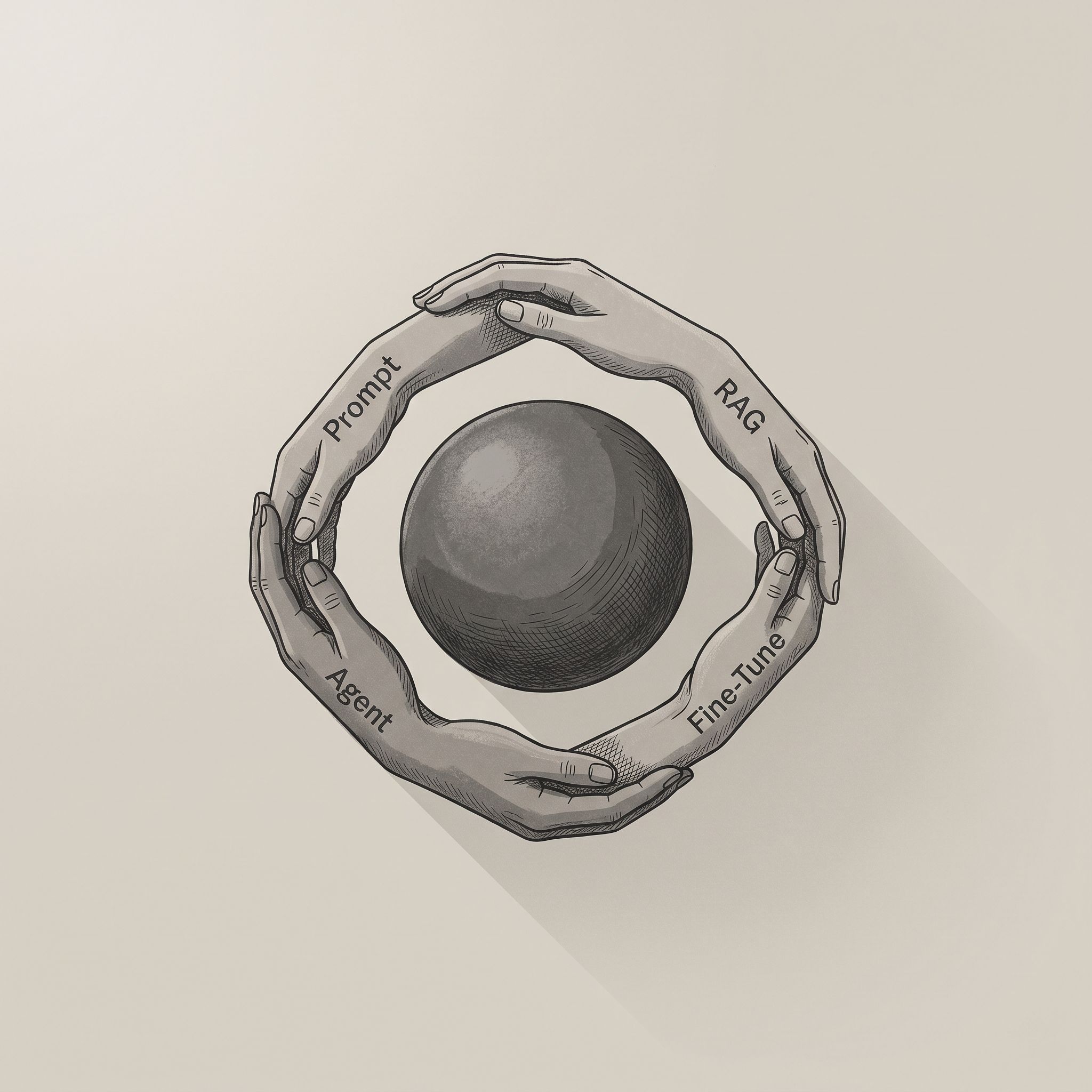

The Irreducible Complexity Law

Language understanding has a floor of complexity that cannot be eliminated, only relocated. You can push it into the prompt, into RAG, into fine-tuning, into tool schemas, into the agent graph, or onto the user’s shoulders via clarifying questions, but the total never goes down. Most “clever” LLM architectures are just complexity-laundering schemes.

The Second-Agent Effect

The team that shipped a solid single-agent v1 almost always over-engineers v2 into a multi-agent hierarchical planner with memory, reflection, critics, and a message bus; it ships later, costs more, and performs worse on the same evals. The working simple system gets mistaken for a stepping stone when it was actually the destination.

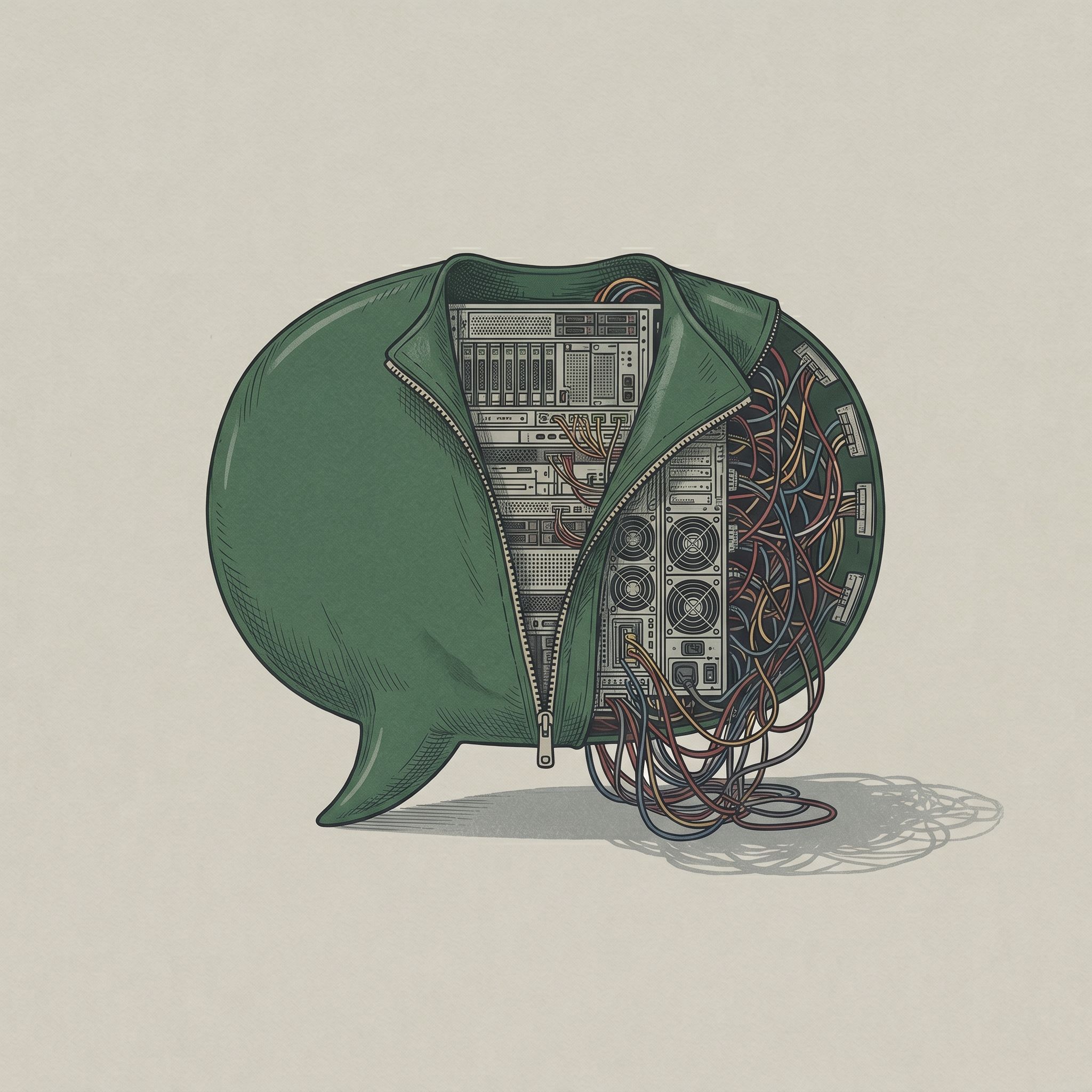

The Fallacies of Agentic Computing

Every agent framework quietly assumes tools are reliable, latency is zero, bandwidth is infinite, the schema is stable, and the API won’t rate-limit you mid-loop. None of these are true, and each false assumption eventually manifests as an agent silently retrying, hallucinating a tool response, or burning tokens in a doom loop. Agents are distributed systems wearing a chatbot costume.
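Treating tools as a distributed system starts with bounded retries and an explicit failure that the agent cannot paper over. This sketch uses capped exponential backoff with full jitter. Which exceptions are retryable (here `TimeoutError` and `ConnectionError`) is an assumption that depends on your client library, and rate-limit errors usually have their own type.

```python
import random
import time


class ToolError(Exception):
    """Raised when a tool keeps failing; surface it instead of letting the model invent a result."""


def call_with_retry(tool, *args, attempts=4, base_delay=0.5, max_delay=8.0,
                    retry_on=(TimeoutError, ConnectionError), sleep=time.sleep):
    """Retry a flaky tool with capped exponential backoff and full jitter."""
    last_exc = None
    for attempt in range(attempts):
        try:
            return tool(*args)
        except retry_on as exc:
            last_exc = exc
            if attempt == attempts - 1:
                break
            # Full jitter: sleep a random amount up to base_delay * 2**attempt, capped.
            sleep(random.uniform(0, min(max_delay, base_delay * 2 ** attempt)))
    name = getattr(tool, "__name__", "tool")
    raise ToolError(f"{name} failed after {attempts} attempts") from last_exc
```

The `ToolError` should go back to the agent loop as an explicit failure. It should not become a prompt that invites the model to guess what the tool would have said.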

The Robustness Principle for LLMs

Be liberal in what you accept from the model, conservative in what you emit to downstream systems. Assume the JSON will be malformed, the tool call will have an extra field, the answer will occasionally be in Markdown when you asked for plain text. Validate, repair, or reject before anything touches production. The cost of trusting LLM output verbatim is paid in 3am pages.
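Here is a sketch of the validate, repair, or reject step for a JSON tool result, assuming a hypothetical two-field downstream schema. The repair is deliberately naive: it slices from the first `{` to the last `}`, so it will fail on prose that contains braces. Extra fields are dropped rather than forwarded, and anything that still fails raises.

```python
import json

REQUIRED = {"name": str, "quantity": int}  # hypothetical downstream schema


def parse_model_json(raw: str) -> dict:
    """Liberal in: tolerate prose or Markdown around the object. Conservative out: exact schema or raise."""
    text = raw.strip()
    # Repair: grab the outermost {...} in case the model wrapped JSON in Markdown or prose.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found")
    data = json.loads(text[start:end + 1])  # JSONDecodeError is a ValueError
    # Drop extra fields instead of forwarding them downstream.
    clean = {k: data[k] for k in REQUIRED if k in data}
    missing = REQUIRED.keys() - clean.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    for key, typ in REQUIRED.items():
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        if not isinstance(clean[key], typ) or (typ is int and isinstance(clean[key], bool)):
            raise ValueError(f"{key} should be {typ.__name__}")
    return clean
```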

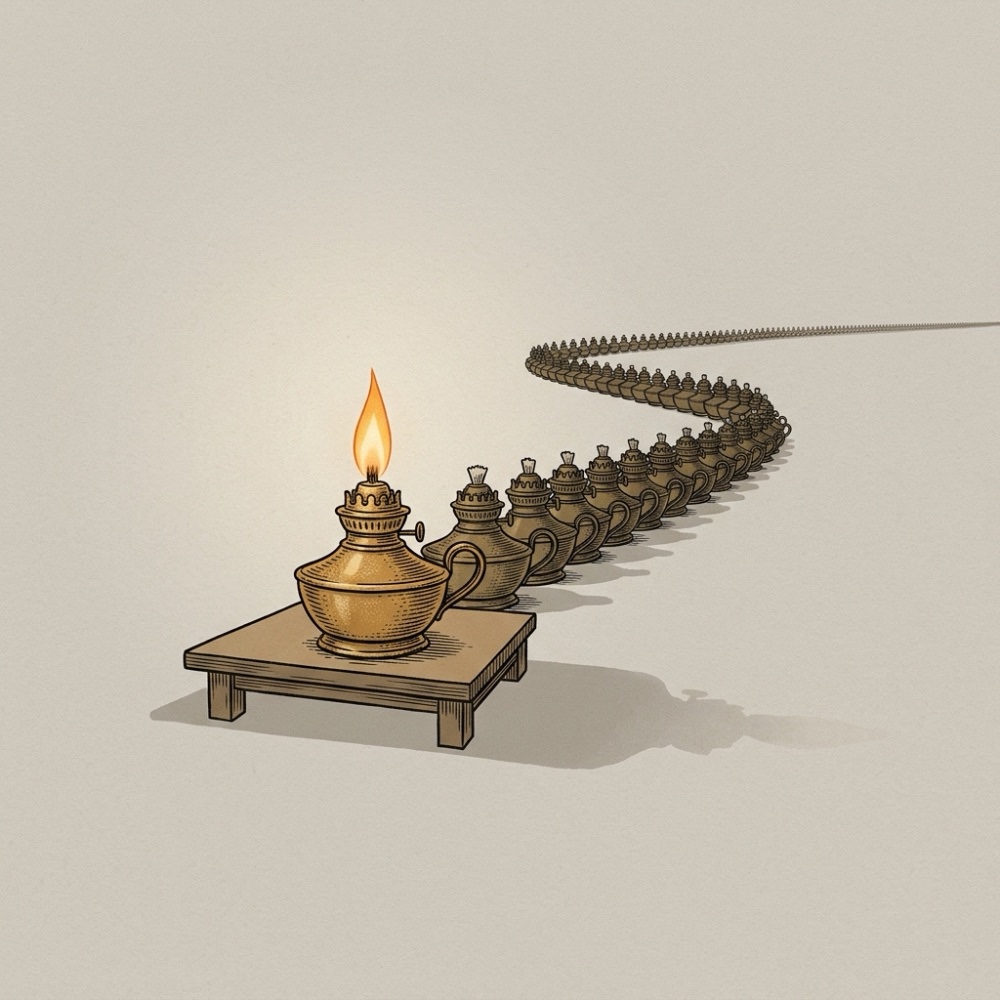

The Cheap-Brain Compute Trap

Every efficiency gain in LLMs (cheaper tokens, faster training, distilled models, better hardware) expands total compute demand rather than shrinking it. When inference drops 10x in cost, it doesn’t free up the GPU fleet; it unlocks a hundred new use cases that were previously uneconomical. Agents that once ran hourly now run per-keystroke. Features that once summarized a document now summarize every paragraph. This is why the AI infrastructure bill keeps growing in lockstep with efficiency breakthroughs: each task gets cheaper, and the world responds by doing vastly more of them.
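Here is a back-of-the-envelope version with hypothetical numbers. Let total spend $S$ be price per call $p$ times call volume $Q$. A 10x price cut that triggers a 100x rise in volume raises the bill tenfold:

$$
S = p\,Q, \qquad S' = \frac{p}{10}\cdot(100\,Q) = 10\,S
$$

More generally, spend falls only if volume grows by a smaller factor than the price falls.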